
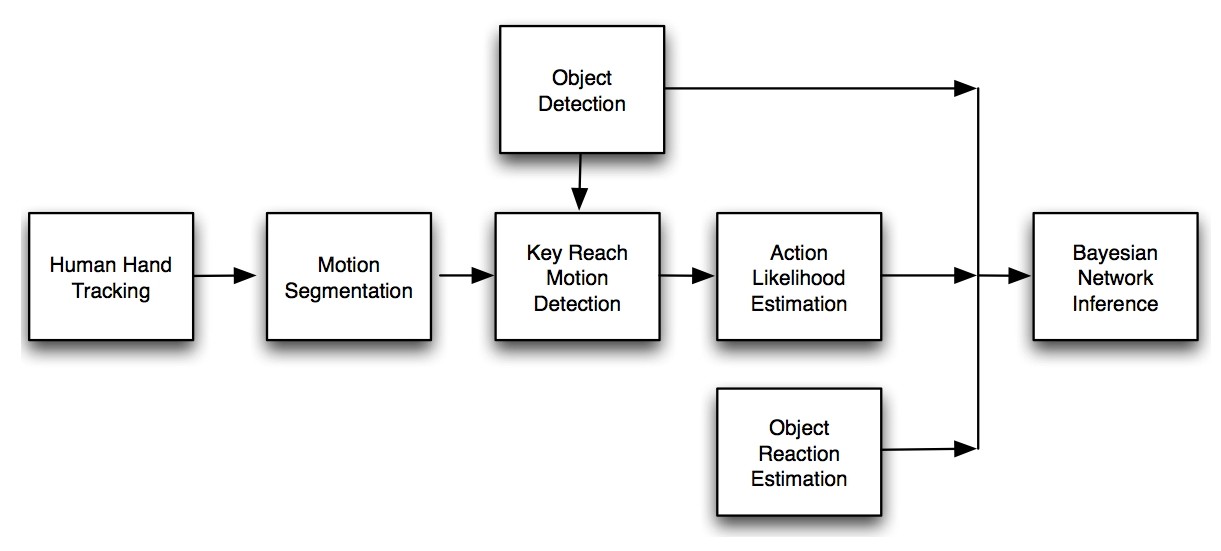

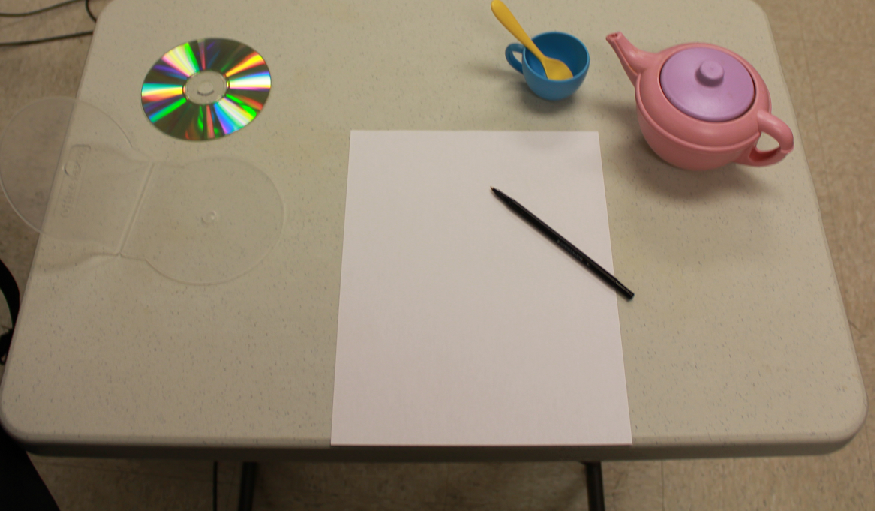

This project develops a novel object-object affordance learning approach that enables intelligent robots to learn the interactive functionalities of objects from human demonstrations in everyday environments. Instead of considering a single object, we model the interactive motions between paired objects in a human-object-object way. The innate interaction-affordance knowledge of the paired objects is learned from a labeled training data set that contains relative motions of the paired objects, human actions, and object labels. The learned knowledge is represented with a Bayesian Network, which can be used both to improve the recognition reliability of objects and human actions and to generate a proper manipulation motion for a robot once a pair of objects is recognized. The framework is illustrated in Figure 1.

In daily life, when we perform tasks, we pay most of our attention to object states or object interactions. For example, when we write on paper with a pen, we focus on the pen point, which is where the pen and the paper interact. Moreover, object interaction can directly reveal an object's functions. For instance, when we put a book into a schoolbag, the putting motion tells us that the schoolbag is a container for books. There are endless examples of interacting paired objects in our daily lives. Figure 2 shows several objects on a table that have an inter-object relationship: a CD and a CD case, a pen and a piece of paper, a spoon and a cup, and a cup and a teapot. In this project, we capitalize on the strong relationship between paired objects and interactive motion by building an object relation model and associating it with a human action model in the human-object-object way to characterize inter-object affordance.

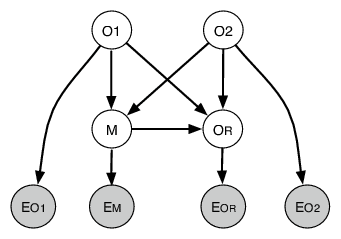

We simplify functionally related objects into piece-wise functionally related paired objects, model the inter-object manipulation motions as the inter-object affordance, and associate that affordance with paired-object recognition. The goal is to allow robots to learn inter-object affordance motions from humans and then to generate the correct manipulation motion when they observe the paired objects. To model the functional relationship of the paired objects and their relationship with the manipulation motion, we develop a graphical model that connects the paired objects and the manipulation motions. The graphical model intuitively represents the functional connectivity of the objects, such as a teapot and a cup or a book and a schoolbag, and extends that connectivity to manipulation motions. A Bayesian Network is employed to model these relationships, in which the paired objects, the interactive action, and the consequence of the object interaction are each included as nodes in the graphical model (Figure 3).
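To make the idea concrete, the following is a minimal sketch of how a discrete network over a paired object and an action can sharpen an ambiguous recognition result. It is not the project's actual model: the labels, the uniform prior over pairs, the conditional table `P_ACTION`, and the detector likelihoods are all hypothetical values chosen for illustration, and the consequence node is omitted for brevity.

```python
from itertools import product

# Hypothetical label sets (assumptions for illustration only).
OBJECTS_1 = ["teapot", "pen"]
OBJECTS_2 = ["cup", "paper"]
ACTIONS = ["pour", "write"]

# Hypothetical conditional table P(action | object1, object2).
P_ACTION = {
    ("teapot", "cup"): {"pour": 0.9, "write": 0.1},
    ("teapot", "paper"): {"pour": 0.5, "write": 0.5},
    ("pen", "cup"): {"pour": 0.5, "write": 0.5},
    ("pen", "paper"): {"pour": 0.1, "write": 0.9},
}


def posterior(like_o1, like_o2, like_a):
    """Return the normalized joint posterior P(o1, o2, a | evidence).

    Each argument maps a label to the likelihood a (hypothetical)
    detector assigns to it. The prior over object pairs is uniform.
    """
    prior = 1.0 / (len(OBJECTS_1) * len(OBJECTS_2))
    joint = {}
    for o1, o2, a in product(OBJECTS_1, OBJECTS_2, ACTIONS):
        joint[(o1, o2, a)] = (
            prior
            * P_ACTION[(o1, o2)][a]
            * like_o1[o1]
            * like_o2[o2]
            * like_a[a]
        )
    total = sum(joint.values())
    return {key: value / total for key, value in joint.items()}


def marginal(post, index):
    """Sum the joint posterior down to one variable (0=o1, 1=o2, 2=action)."""
    out = {}
    for key, p in post.items():
        out[key[index]] = out.get(key[index], 0.0) + p
    return out


if __name__ == "__main__":
    # The first object is visually ambiguous; the second object and the
    # observed human action are recognized with more confidence.
    post = posterior(
        like_o1={"teapot": 0.5, "pen": 0.5},
        like_o2={"cup": 0.8, "paper": 0.2},
        like_a={"pour": 0.9, "write": 0.1},
    )
    print(marginal(post, 0))
```

In this toy setting the detector alone cannot tell the teapot from the pen, but the evidence for a cup and a pouring action shifts the posterior toward the teapot, which is the kind of mutual reinforcement between object and action recognition described above.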

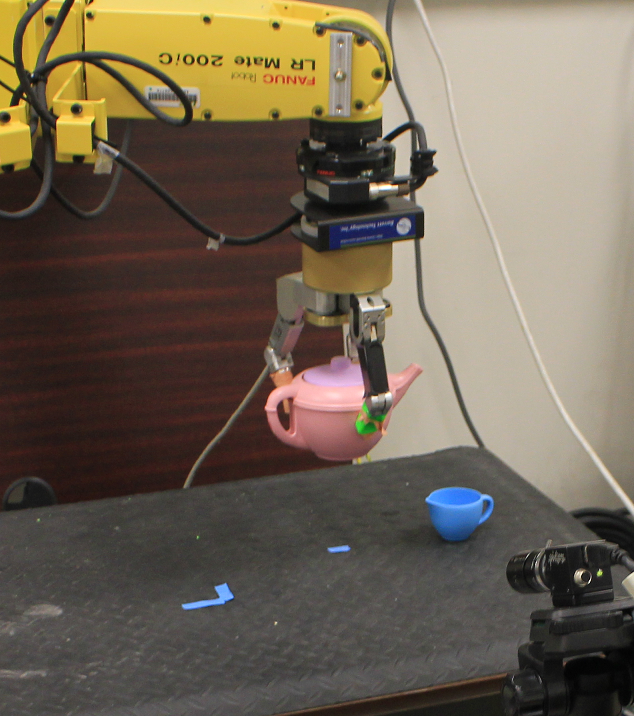

The interactive motions associated with the paired objects can be learned as the interaction affordance using statistical models such as Gaussian mixture models. The learned motion can then be used directly to control a robot to perform the proper manipulation motion when the robot sees the paired objects. We have developed an image-based visual servoing approach that uses the learned motion features in the interaction affordance as control goals, so the robot performs the manipulation task without manually programmed motions. The approach has been tested with the setup shown in Figure 4.
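As a rough illustration of using a learned feature as a servoing goal, the sketch below applies the classic image-based visual servoing law to a single image point. It is heavily simplified and is not the project's controller: it assumes the camera only translates parallel to the image plane, that the point depth is known and constant, and that the goal `s_goal` is a hypothetical learned feature (for example, the mean of one learned Gaussian component). The function names and parameter values are likewise assumptions.

```python
def ibvs_step(s, s_goal, depth, gain, dt):
    """One control step for a single normalized image point.

    With only (vx, vy) camera translation, the point interaction matrix
    reduces to L = -(1/depth) * I, so the classic law
    v = -gain * L^-1 * (s - s_goal) becomes v = gain * depth * (s - s_goal).
    """
    error = (s[0] - s_goal[0], s[1] - s_goal[1])
    v = (gain * depth * error[0], gain * depth * error[1])
    # Simulated feature motion: s_dot = L v = -(1/depth) * v.
    s_next = (s[0] - v[0] / depth * dt, s[1] - v[1] / depth * dt)
    return s_next, v


def servo(s, s_goal, depth=0.5, gain=1.0, dt=0.05, tol=1e-3, max_steps=1000):
    """Iterate the control law until the feature error drops below tol."""
    steps = 0
    while steps < max_steps:
        err = ((s[0] - s_goal[0]) ** 2 + (s[1] - s_goal[1]) ** 2) ** 0.5
        if err < tol:
            break
        s, _ = ibvs_step(s, s_goal, depth, gain, dt)
        steps += 1
    return s, steps


if __name__ == "__main__":
    # Hypothetical current feature and learned goal feature.
    final_s, n_steps = servo(s=(0.2, -0.1), s_goal=(0.0, 0.0))
    print(final_s, n_steps)
```

Under these assumptions the feature error shrinks by a factor of `1 - gain * dt` per step, so the point converges exponentially to the goal. A full controller would use the complete six-degree-of-freedom interaction matrix and its pseudo-inverse, and would track a sequence of learned goals rather than a single one.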

Participants:

Collaborators

Publication: This project is supported by a USF startup grant.
